The rampant copyright infringement of generative AI is in the DNA of the Internet

What happens when art becomes data to feed artificial intelligence?

Adobe recently came under fire when Creative Cloud users were notified about changes to the terms of service which were interpreted to mean that unpublished files would be scraped as training data for generative A.I.

Adobe later clarified that only work published on Adobe’s services, such as Adobe Stock, which distributes stock photography, would be used for training its Firefly A.I. model, not local files or works in progress being edited in applications like Photoshop or Substance Painter.

In the interim, people took to social media to urge users to cancel their Creative Cloud subscriptions and switch to alternative applications to avoid having their intellectual property stolen by Adobe’s A.I.

The short version of this story is that it was a misunderstanding, but it does bring attention to a serious lack of trust between creatives and software companies, especially in the A.I. space. How do we avoid having creative work stolen by A.I.?

How does A.I. end up stealing art?

It should go without saying that A.I. models don’t actually create anything from scratch, but just in case you weren’t aware, here are the basics of how it works:

A large dataset of images is fed into a neural network, which uses machine learning to identify patterns. Text-to-image models like Midjourney also use a language component, commonly a “transformer,” to interpret a text prompt. Basically, the text is analyzed and then the patterns learned from the dataset of images are used to compose a new image. If the dataset didn’t include realistic landscape photos, for example, the A.I. wouldn’t be able to produce a realistic landscape photo.

There’s already a class action lawsuit against the companies behind Midjourney, Stable Diffusion and DreamUp, which are all image generators based on the same dataset, LAION-5B. The suit alleges that the artists did not consent to being included in these models, were not compensated despite the fact that these A.I. services collect revenue, and were not credited in the final product.

Most A.I. companies do not disclose the sources of their datasets. Instead, they use vague terms such as “publicly available” or “licensed” images.

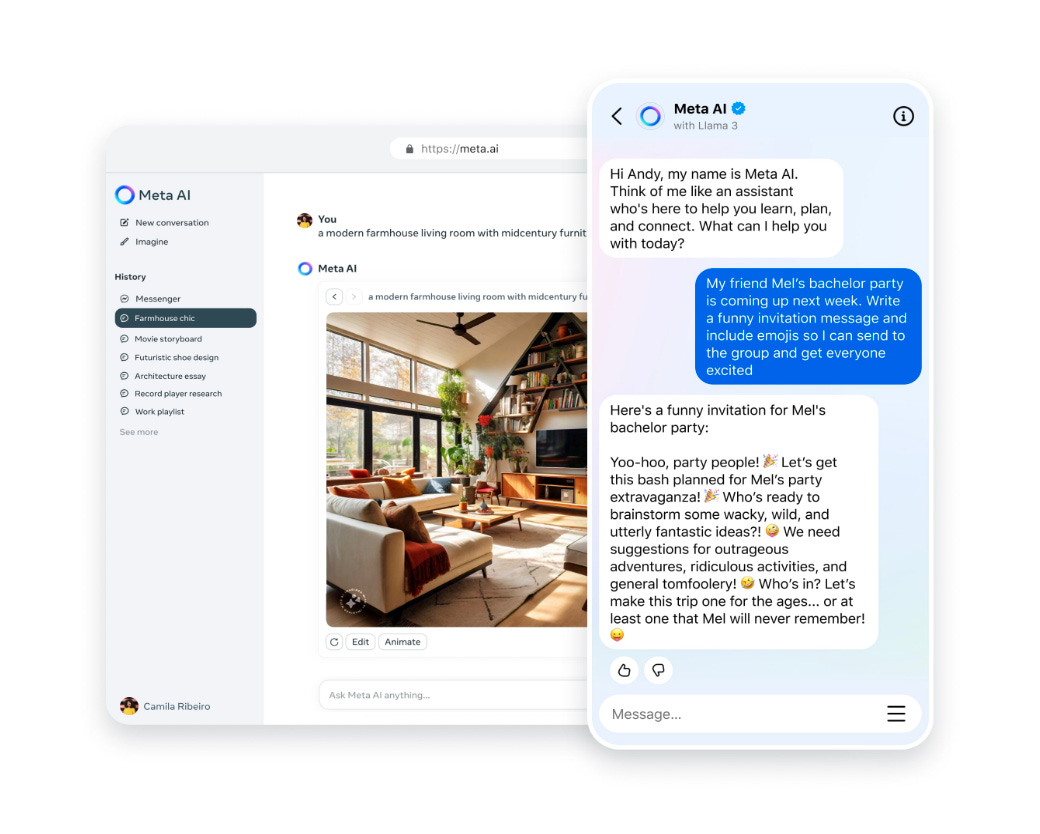

Meta recently pushed its own language and image generation A.I., Meta A.I., to Instagram, Messenger, and WhatsApp users. Meta similarly does not expand on where its training images come from.

One of the big questions after Apple announced its own A.I. on Monday was “where did Apple source images for its generative model?” Apple quietly released this information right after WWDC, but remained vague when it comes to images. Apple’s model is apparently trained on “publicly available” images, and publishers do have the option to opt out, but it’s not clear what “public” means.
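For publishers wondering what opting out looks like in practice, here is a minimal sketch. It assumes the opt-out is expressed through a site’s robots.txt file and that the relevant crawler identifies itself as `Applebot-Extended`; neither detail comes from this article, so check the crawler’s own documentation. The robots.txt content, the `is_allowed` helper and the example URL are all hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block one A.I. training crawler, allow everyone else.
# "Applebot-Extended" is an assumed user-agent name, used here for illustration.
ROBOTS_TXT = """\
User-agent: Applebot-Extended
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if these robots.txt rules let user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    url = "https://example.com/portfolio/painting.png"
    for agent in ("Applebot-Extended", "SomeOtherBot"):
        print(agent, "allowed:", is_allowed(ROBOTS_TXT, agent, url))
```

Keep in mind that robots.txt is only a request. It relies on the crawler choosing to honor it, and it does nothing about work that has already been collected.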

Copyright infringement is baked into the Internet

I grew up in the early days of social media and content sharing. I remember things like The Facebook and even MySpace. Why do I bring this up? Because copyright infringement and intellectual property theft are part of the Internet’s DNA and we’ve known that for decades.

Look no further than the success of Napster and LimeWire in the proliferation of “free” music, or websites like The Pirate Bay for the dissemination of pretty much anything else. Music, TV shows, movies, and even software were up for grabs.

It turns out that distributing a product for free that someone else sells for money is actually an infringement on their copyright and you can definitely be sued for it. Who knew?

Of course, there were lawsuits, and most of these services were eventually shut down and replaced with paid alternatives. iTunes became the record industry’s alternative to Napster, for example. There was a consensus online that if the corporations could come up with a legal alternative that was more convenient than piracy, we would use it. This is why services like Netflix and Spotify took off. Instead of searching for torrents, which carried the risk of Trojan horse viruses and of being sued by a record company, you could just pay $5 a month and stream whatever songs you wanted.

We collectively are so numb to copyright infringement online that most of us probably don’t even notice it. When you’re scrolling on TikTok and see someone dancing to the latest Kendrick Lamar diss track, do you stop and consider if Kendrick is getting royalties? Probably not. When you search up a clip of your favorite TV show on YouTube, do you care if it’s uploaded by an official YouTube channel or if the studio is being compensated for your viewership?

I like to think this indifference comes down to who content is being stolen from. A faceless record company or movie studio isn’t a very sympathetic victim. So what happens when corporations start stealing intellectual property from independent artists and individuals? In this case, people get upset and unsubscribe from the service.

There have already been multiple controversies about artists finding their work swept up in a generative model without their consent and without notification, including the one involving Adobe. And in most cases, they’re told they can opt out after the fact, with no compensation for what has already been scraped into the model.

This after-the-fact approach has been the M.O. of copyright infringement online from the beginning. Take, for example, services like YouTube, where a rights holder can flag content as infringing only after it’s already been uploaded. We either do not have or refuse to use tools that prevent infringing content from being shared in the first place.

Artists should continue to opt out of having their work used to train these A.I. models, and they can use a tool like haveibeentrained.com to see if their work is currently being used.

It’s actually ridiculous to me that the default in this industry is to opt out of having your art used to benefit these tech products. It should absolutely be opt-in instead.

As A.I. becomes more widespread and deeply integrated into the devices we use every day from Apple, Google, Samsung, and others, we should be demanding transparency. Legislatively, we can demand transparency about where this data is sourced. Judicially, we may need the courts to step in and define whether these tools are generating new, transformative works or, when done incorrectly, derivative works that infringe on copyright.

For now, it’s the Wild West.